The Addendum Nobody Writes

There's a document in radiology that everyone knows about and nobody talks about. It's not a breakthrough paper or a new protocol. It's a correction slip, the addendum, and it represents something the profession would rather forget. With AI, this document no longer needs to exist.

Radiologists are extraordinarily good at their work. They spend years learning to see what others cannot: the shadow that doesn't belong, the margin that should be sharper, the density that tells a story. They interpret thousands of images. They carry enormous responsibility on relatively quiet shoulders. They also contend with burnout.

And yet, somewhere in every radiology department, in every country, in every language, reports go out with errors that should never have left the building.

Not missed diagnoses. Not subtle findings that could challenge anyone. We're talking about the report that says "left" when the lesion is on the right. The report where the conclusion doesn't mention the critical finding buried in the findings section. The report where the measurement reads "__ cm" because the number was never filled in. The report written for a uterus that belongs to a patient who doesn't have one.

These are not cognitive failures. These are not the tragic, complex perceptual errors that fill academic literature. These are mechanical errors — the kind that happen when a human being is asked to perform a task at a pace and volume that no human being was designed to sustain.

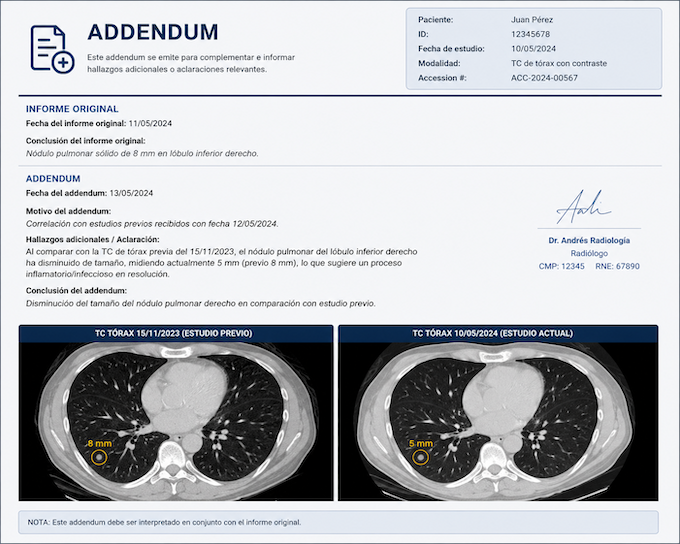

And for each one, somebody has to write an addendum.

The Addendum Is a Symptom, Not the Problem

Most conversations about radiology errors go immediately to the big picture: cognitive bias, perceptual failures, the limits of human attention under fatigue. These are real and worth discussing. But they've become so dominant in the literature that a quieter, more tractable problem has been largely ignored.

A radiologist interpreting one image every three to four seconds, as studies have documented, is not making thoughtful decisions about every template field. They are dictating, correcting, moving on, dictating again. Speech recognition systems introduce words that don't exist in medical vocabulary. Comparisons with prior studies go unmentioned because there's no moment to pause and check whether prior imaging exists. A finding is described in precise anatomical detail in the body of the report and then simply... not carried into the conclusion.

These errors are preventable. Not reducible. Not manageable. Preventable.

And the addendum — that small, somewhat embarrassing document — is how we've historically handled them. A radiologist is notified. They review. They issue a correction. The corrected report is sent. Time has passed. Decisions may already have been made.

The addendum is the profession's way of catching what slipped through. It works, in the way that a bucket under a leaking roof works. It addresses the water on the floor without addressing the leak.

What AI Can Actually Do Here

There is no shortage of articles explaining what AI might do for radiology — replace radiologists, read every CT simultaneously, eliminate the need for human interpretation altogether. The discourse tends toward either utopian replacement or defensive dismissal.

Both miss the point.

The genuinely interesting question is narrower and more practical: what if AI could read every report as it is being written and catch the mechanical errors before the report leaves the system?

Not interpret the images. Not make the diagnosis. Not replace the radiologist's judgment. Text only: simply read the text of the report (just the body, without any patient data) and verify a checklist that the human brain, under pressure, sometimes skips.
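A minimal sketch of the "just the body, without any patient data" step, in Python. It assumes a hypothetical plain-text report in which identifiers sit on labeled header lines (`Patient:`, `MRN:`, `DOB:` and so on); the label list and the `strip_identifying_lines` name are illustrative, not taken from any real system. Genuine de-identification is harder than this: a name or date dictated into the body itself would pass straight through this filter.

```python
import re

# Hypothetical header labels that carry patient identifiers in this sketch.
IDENTIFYING_LABELS = ("patient", "name", "mrn", "dob", "date of birth", "accession")

# Matches a line such as "MRN: 123456" whose label is one of the identifying labels.
HEADER_PATTERN = re.compile(
    r"^\s*(" + "|".join(re.escape(label) for label in IDENTIFYING_LABELS) + r")\s*:",
    re.IGNORECASE,
)


def strip_identifying_lines(report_text: str) -> str:
    """Drop labeled identifier lines and keep the rest of the report text."""
    kept = [line for line in report_text.splitlines() if not HEADER_PATTERN.match(line)]
    return "\n".join(kept).strip()


report = """Patient: Jane Doe
MRN: 123456
DOB: 1970-01-01
FINDINGS: 2.1 cm nodule in the right upper lobe.
CONCLUSION: Right upper lobe nodule."""

print(strip_identifying_lines(report))
```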

Is the laterality in the conclusion consistent with the laterality in the findings? Was a measurement entered where the template required one? Does the clinical question posed in the referral appear somewhere in the conclusion? Is contrast media mentioned if the study required it? Is there an organ referenced that conflicts with the surgical history documented in the clinical summary?
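The first question on that checklist can be approximated with a few lines of Python. This is a hypothetical keyword heuristic, not a clinical tool: it assumes the findings and conclusion arrive as separate strings and reports any side named in the conclusion that never appears in the findings. The function names are invented for illustration.

```python
import re

# Laterality terms this sketch recognizes; "bilateral" is treated as its own value.
LATERALITY = re.compile(r"\b(left|right|bilateral)\b", re.IGNORECASE)


def sides_mentioned(text: str) -> set[str]:
    """Return the set of laterality terms that appear in the text, lowercased."""
    return {match.lower() for match in LATERALITY.findall(text)}


def laterality_mismatch(findings: str, conclusion: str) -> set[str]:
    """Return sides named in the conclusion that never appear in the findings."""
    return sides_mentioned(conclusion) - sides_mentioned(findings)


findings = "There is a 1.4 cm hypodense lesion in the right kidney."
conclusion = "Indeterminate left renal lesion; recommend dedicated CT."
print(laterality_mismatch(findings, conclusion))  # {'left'}
```

A heuristic like this misses an error repeated consistently in both sections and can raise false alarms when the conclusion names a side for a different structure, which is why its output is a prompt for the radiologist rather than an automatic correction.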

These are not judgment calls. They are consistency checks. They are the kind of verification that a very attentive resident might perform — if they had time, if they were in the room, if they were reviewing every single report before it went out.
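The surgical-history item from that checklist shows why these are consistency checks rather than judgment calls. The sketch assumes a hypothetical, hand-written table mapping procedure keywords in the clinical summary to the organ each one removes; the table, the example text, and `organ_history_conflicts` are illustrative only.

```python
import re

# Hypothetical lookup: procedure keyword in the clinical summary -> organ it removes.
REMOVED_BY_PROCEDURE = {
    "hysterectomy": "uterus",
    "cholecystectomy": "gallbladder",
    "splenectomy": "spleen",
    "appendectomy": "appendix",
}


def organ_history_conflicts(clinical_summary: str, body: str) -> list[str]:
    """List organs the report body mentions although the history says they were removed."""
    summary = clinical_summary.lower()
    conflicts = []
    for procedure, organ in REMOVED_BY_PROCEDURE.items():
        # Flag only when the procedure is documented AND the organ is named in the body.
        if procedure in summary and re.search(rf"\b{organ}\b", body, re.IGNORECASE):
            conflicts.append(f"'{organ}' described after documented {procedure}")
    return conflicts


summary = "62-year-old woman, status post hysterectomy, now with pelvic pain."
body = "The uterus is anteverted and measures 8 cm. Ovaries are unremarkable."
print(organ_history_conflicts(summary, body))
```

A correct sentence such as "the uterus is surgically absent" would also be flagged. That is a deliberate trade-off in this sketch: a false alarm costs the radiologist a second, while a missed conflict costs an addendum.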

AI can be that resident. For every report. Every time.

Zero Addendums Is Not an Aspiration. It's an Engineering Problem.

The errors that generate addendums are almost entirely classifiable. Researchers have identified and categorized them in detail: wrong laterality, wrong organ, empty measurement fields, mismatched anatomy between findings and conclusion, findings not carried into the conclusion, clinical question unaddressed, identical text repeated between sections, discrepant classification grades.
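The classification in that paragraph maps naturally onto an enumeration, and the simplest category, an empty measurement field, can be caught with a regular expression. This sketch assumes the template leaves placeholders as a run of underscores followed by a unit (`__ cm`, `___ mm`), matching the example earlier in the text; real templates may use other markers, and the names here are hypothetical.

```python
import re
from enum import Enum


class ErrorCategory(Enum):
    """Mechanical report-error categories, as listed in the text."""
    WRONG_LATERALITY = "wrong laterality"
    WRONG_ORGAN = "wrong organ"
    EMPTY_MEASUREMENT = "empty measurement field"
    ANATOMY_MISMATCH = "mismatched anatomy between findings and conclusion"
    FINDING_NOT_IN_CONCLUSION = "finding not carried into conclusion"
    CLINICAL_QUESTION_UNADDRESSED = "clinical question unaddressed"
    REPEATED_TEXT = "identical text repeated between sections"
    DISCREPANT_GRADE = "discrepant classification grade"


# Hypothetical template placeholder: two or more underscores before a unit, e.g. "__ cm".
EMPTY_MEASUREMENT = re.compile(r"_{2,}\s*(mm|cm)\b")


def find_empty_measurements(body: str) -> list[tuple[ErrorCategory, str]]:
    """Flag each unfilled measurement placeholder with up to 30 characters of context."""
    flags = []
    for match in EMPTY_MEASUREMENT.finditer(body):
        start = max(match.start() - 30, 0)
        flags.append((ErrorCategory.EMPTY_MEASUREMENT, body[start:match.end()]))
    return flags


body = "Simple cyst measuring __ cm in the left ovary. Uterus measures 7.2 cm."
for category, snippet in find_empty_measurements(body):
    print(f"{category.value}: {snippet}")
# prints: empty measurement field: Simple cyst measuring __ cm
```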

Each of these is detectable programmatically. Not with complex deep learning models analyzing pixel arrays — with language models reading text, understanding structure, and flagging inconsistencies.
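The text proposes language models for this layer. As a simpler stand-in, the sketch below swaps in a deterministic rule for one category from the list, identical text repeated between sections: it splits on sentence-ending punctuation, normalizes case and whitespace, and returns conclusion sentences copied verbatim from the findings. The splitting rule and function names are assumptions for illustration.

```python
import re


def sentences(text: str) -> list[str]:
    """Split on sentence-ending punctuation, then normalize case and whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [" ".join(part.lower().split()) for part in parts if part]


def repeated_sentences(findings: str, conclusion: str) -> list[str]:
    """Return conclusion sentences that repeat a findings sentence verbatim."""
    findings_set = set(sentences(findings))
    return [s for s in sentences(conclusion) if s in findings_set]


findings = "Liver is normal in size. There is a 9 mm nonobstructing stone in the right kidney."
conclusion = "There is a 9 mm nonobstructing stone in the right kidney."
print(repeated_sentences(findings, conclusion))
```

Deterministic rules like this are cheap and predictable for the sharply defined categories; fuzzier ones, such as whether a finding was carried into the conclusion in different words, are plausibly where a language model would be needed.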

The architectural model is straightforward: a report is generated. Before it is signed and released, the body of the report — stripped of all identifying patient information — passes through an AI layer. That layer returns a structured analysis: here is what appears inconsistent, here is what appears missing, here is what appears contradictory between findings and conclusion.
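That architecture can be sketched as a list of independent checks whose results are merged into one structured analysis. In this hypothetical Python version, the report arrives already de-identified and split into named sections, each check returns `Flag` objects tagged "inconsistent", "missing", or "contradictory" (the three kinds named in the paragraph), and `analyze` serializes everything to JSON. The two checks shown are deliberately crude placeholders; a real layer would plug in more robust ones, including language-model calls.

```python
import json
import re
from dataclasses import asdict, dataclass
from typing import Callable


@dataclass
class Flag:
    kind: str  # "inconsistent", "missing", or "contradictory"
    message: str


# A check reads the de-identified report sections and returns zero or more flags.
Check = Callable[[dict[str, str]], list[Flag]]


def conclusion_present(sections: dict[str, str]) -> list[Flag]:
    if not sections.get("conclusion", "").strip():
        return [Flag("missing", "Report has no conclusion text.")]
    return []


def clinical_question_addressed(sections: dict[str, str]) -> list[Flag]:
    # Crude heuristic: at least one word of 5+ letters from the question should reappear.
    question = sections.get("clinical_question", "").lower()
    question_words = set(re.findall(r"[a-z]{5,}", question))
    conclusion = sections.get("conclusion", "").lower()
    if question_words and not any(word in conclusion for word in question_words):
        return [Flag("missing", "Clinical question does not appear to be addressed.")]
    return []


def analyze(sections: dict[str, str], checks: list[Check]) -> str:
    """Run every check and return the combined structured analysis as JSON."""
    flags = [flag for check in checks for flag in check(sections)]
    return json.dumps({"flags": [asdict(flag) for flag in flags]}, indent=2)


sections = {
    "clinical_question": "Rule out appendicitis.",
    "findings": "Normal appendix. No free fluid.",
    "conclusion": "No acute abnormality.",
}
print(analyze(sections, [conclusion_present, clinical_question_addressed]))
```

Keeping each check a plain function with the same signature means categories can be added or tuned one at a time without touching the rest of the layer.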

The radiologist sees this analysis before signing. Not a rejection of their work. Not a second-guessing of their diagnosis. A quick-read quality check, the kind that takes seconds to review, that catches what fatigue or volume or dictation error introduced.

The report that goes out is the corrected version. No addendum necessary.

This is not a radical reimagining of radiology. It is the same process that exists now, minus the latency. Instead of catching the error after the report is released, you catch it before. The addendum doesn't disappear because standards changed or radiologists became more careful. It disappears because the system no longer allows the report to leave before basic consistency has been verified.
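The final step, not letting a report leave before basic consistency has been verified, can be modeled as a gate on signing. This sketch assumes that "verified" means the radiologist has acknowledged every flag, whether by fixing the text or by judging it a false alarm; the `Report` type and both functions are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Report:
    body: str
    flags: list[str] = field(default_factory=list)       # open flags from the check layer
    acknowledged: set[str] = field(default_factory=set)  # flags the radiologist has reviewed
    released: bool = False


def acknowledge(report: Report, flag: str) -> None:
    """Record that the radiologist reviewed a flag (fixed it or judged it a false alarm)."""
    if flag not in report.flags:
        raise ValueError(f"unknown flag: {flag!r}")
    report.acknowledged.add(flag)


def sign_and_release(report: Report) -> None:
    """Release the report only when every flag has been reviewed."""
    pending = [f for f in report.flags if f not in report.acknowledged]
    if pending:
        raise PermissionError(f"{len(pending)} flag(s) still need review: {pending}")
    report.released = True


issue = "Conclusion says left; findings say right."
report = Report(body="FINDINGS: right renal lesion. CONCLUSION: left renal lesion.", flags=[issue])
try:
    sign_and_release(report)
except PermissionError as err:
    print("Blocked:", err)
acknowledge(report, issue)
sign_and_release(report)
print("Released:", report.released)
```

Requiring acknowledgment rather than automatic correction keeps the final word with the radiologist: the check layer informs the signature, it does not replace it.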

The Human Factor Doesn't Go Away — It Gets Protected

There is a version of this conversation that radiologists rightly resist: the framing that errors are a human failure that technology must correct. That version is both inaccurate and counterproductive.

The errors we're describing are not the result of radiologists being careless. They are the result of a system that has scaled image volume without scaling the conditions under which radiologists work. A professional asked to interpret thousands of images per shift, managing dictation systems, prior imaging review, critical result communication, and report formatting simultaneously, is going to have reports leave their desk with mechanical inconsistencies. This is not a character flaw. It is a predictable output of an impossible input.

What AI introduces into this equation is not oversight of the radiologist. It is protection of them.

The radiologist who dictates "right" when they mean "left" because they've been reading for six hours is not a worse radiologist than they were at hour one. They are a radiologist who needed a system that could catch that particular error at that particular moment. The AI layer is not there to judge — it is there to function as the colleague who reads over your shoulder and says, quietly, before you hit send: "Hey — did you mean the other side?"

That colleague, historically, has not existed at scale. Now it can.

The Standard Should Be Higher Than "We Caught It"

In most industries, catching an error after delivery is not considered a quality system. In radiology, the addendum has been normalized as an acceptable part of the workflow — evidence that the system works, that errors are identified and corrected.

But an addendum means a report with an error was released. It means a clinician read something incorrect. It means a patient's care, for some window of time, was based on wrong information.

The standard the profession should hold itself to — and that technology now makes achievable — is not "we corrected it." It is "it never went out wrong."

That is not a utopian standard. It is an engineering one.

Zero addendums from mechanical report errors is not the elimination of human fallibility. It is the intelligent deployment of a layer that exists for one purpose: to ensure that what the radiologist meant to say is, in fact, what the report says.

Radiologists are not failing at their jobs. The systems around them are failing to protect the work they do.

That is the problem AI should be solving. And it can.

The errors that generate addendums are classifiable, detectable, and preventable. What changes is not the radiologist; it's the moment of intervention. With AI, the era of the addendum can come to an end.